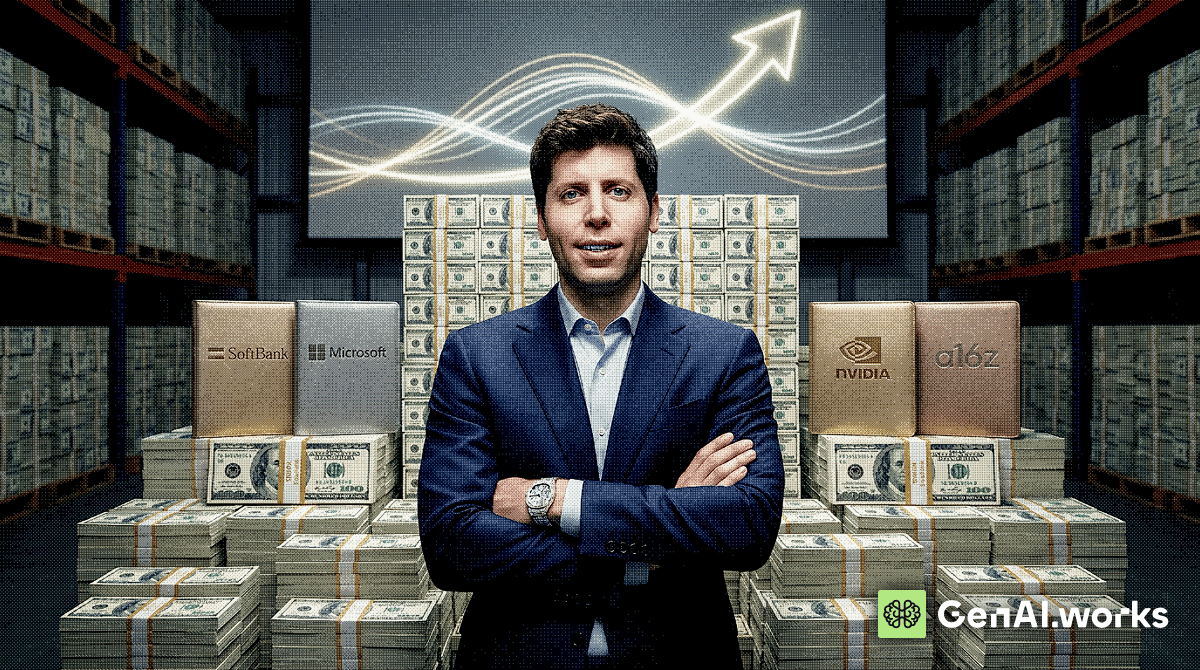

OpenAI closed a $122B funding round at an $852B valuation, the largest single private fundraise in venture history.

Who paid: SoftBank co-led alongside a16z, D.E. Shaw Ventures, MGX and TPG. Amazon committed up to $50B and Nvidia $30B, while SoftBank secured a $40B bridge loan to cover its share. Microsoft also participated. For the first time, around $3B came from retail investors through bank channels.

The numbers underneath: OpenAI says it generates $2B per month in revenue and claims 900M+ weekly active users on ChatGPT. Enterprise now accounts for over 40% of revenue, up from around 30% last year, and is on track to reach parity with consumer by end of 2026.

What they're building: A unified "AI superapp" merging ChatGPT, Codex and its agentic tools into a single interface. This follows the deliberate wind-down of the Sora video app.

IPO groundwork: ARK Invest will include OpenAI in several ETFs, giving retail investors exposure ahead of a widely expected IPO, possibly as early as Q4 2026. The company also expanded its revolving credit facility to $4.7B (currently undrawn).

The enterprise revenue trajectory matters more than the headline number. Over 40% of revenue and climbing fast means OpenAI's decision to drop its consumer experiments and consolidate around developer and business tools was a bet on where the money was already heading.

The $122B is the fuel. The superapp is the vehicle. The IPO is the destination.
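For readers who want to sanity-check the headline figures, here is a minimal back-of-the-envelope sketch. It uses only the numbers quoted above and assumes a naive annualization (the current month repeated twelve times). The function names are illustrative, and "over 40%" is treated as a lower bound.

```python
# Figures quoted in this issue; everything derived from them is illustrative.
MONTHLY_REVENUE_B = 2.0    # "$2B per month", as stated by OpenAI
VALUATION_B = 852.0        # valuation at which the round closed
ENTERPRISE_SHARE = 0.40    # "over 40%" of revenue, used here as a lower bound


def annualized_run_rate(monthly_b: float) -> float:
    """Naive annualization: assumes the current month repeats 12 times."""
    return monthly_b * 12


def revenue_multiple(valuation_b: float, annual_revenue_b: float) -> float:
    """Valuation divided by annualized revenue."""
    return valuation_b / annual_revenue_b


run_rate = annualized_run_rate(MONTHLY_REVENUE_B)
multiple = revenue_multiple(VALUATION_B, run_rate)
enterprise_monthly = MONTHLY_REVENUE_B * ENTERPRISE_SHARE

print(f"Annualized run rate: ${run_rate:.0f}B")
print(f"Valuation / run rate: {multiple:.1f}x")
print(f"Enterprise revenue: at least ${enterprise_monthly:.1f}B per month")
```

On these assumptions the round values OpenAI at roughly 35 times its annualized revenue, with enterprise contributing at least $0.8B of each month's $2B.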

You want reliable AI answers every day. DIY RAG sounds tempting, until you are sourcing data from everywhere, managing security rules and fixing broken pipelines. It’s like building a kitchen instead of serving meals.

Progress Agentic RAG-as-a-Service changes that. Approved data. Built-in governance. Consistent results. No messy middle, just answers you can trust. Instead of managing infrastructure, you can focus on leveraging the power of AI.

Skip building the kitchen. Start serving AI. Fast.

Try it now

GenAI Works Academy is now live

Practical courses on ChatGPT, Claude, Cursor, Perplexity, DeepSeek and more, taught by instructors who actually use them in production. Whether you want to build faster, automate your work or figure out which tools are worth your time, there's a course for it.

What's inside:

- Prompt engineering for professionals
- Claude Code from zero to shipping
- AI-powered marketing, data science and business strategy
- Beginner to advanced tracks across every major platform

No filler. No theory-only lectures. Just the skills that are actually moving careers forward right now.

Explore GenAI Academy →

Anthropic: Claude Code's Source Code Is Now Everyone's Business

Anthropic accidentally shipped a 59.8MB source map file in Claude Code version 2.1.88 on npm, exposing the full TypeScript codebase: roughly 1,900 files and 512,000+ lines of code. A security researcher flagged it on X within hours. By the time Anthropic pulled the package, the code had been mirrored across GitHub with tens of thousands of stars and forks.

This is Anthropic's second major leak in under a week, following the accidental exposure of details about its unreleased Mythos model through a misconfigured CMS.

What the code reveals: A sophisticated three-layer memory architecture for managing long-session context, a persistent background agent codenamed KAIROS that can act autonomously without user prompting, a 30-minute deep-planning system called ULTRAPLAN running on remote Opus 4.6 instances, multi-agent coordination and 44 feature flags for unreleased capabilities.

The security problem: The leaked code maps out exactly how Claude Code handles permissions, agent orchestration and security guardrails. Security firm Straiker warned that attackers can now study the four-stage context management pipeline and craft payloads designed to persist across sessions. Worse still, a separate supply chain attack on the axios npm package hit within hours of the leak. Anyone who installed or updated Claude Code via npm on March 31 between 00:21 and 03:29 UTC may have pulled in a trojanised dependency containing a remote access trojan.

The copyright question: The leaked code includes "Undercover Mode," a system that actively hides AI authorship from commits to public repositories, stripping Co-Authored-By attribution and instructing Claude to never mention it is an AI. Anthropic has publicly acknowledged that Claude Code built significant portions of its own products. Under current US copyright law, following the Supreme Court's March 2025 decision declining to extend copyright to AI-generated works, the enforceability of Anthropic's IP claims over AI-authored code is genuinely unclear. Competitors studying the leaked source may be looking at material that is legally unprotectable.
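If you have an install or update timestamp from your own logs, the npm exposure window quoted in the security notes (March 31, 00:21 to 03:29 UTC) can be checked with a few lines of Python. This is a minimal sketch: the function name `in_exposure_window` is hypothetical, and because the text does not state the year, it is left as a required parameter instead of being guessed.

```python
from datetime import datetime, timedelta, timezone


def in_exposure_window(installed_at: datetime, year: int) -> bool:
    """Return True if a timezone-aware timestamp falls inside the quoted window.

    The window (March 31, 00:21 to 03:29 UTC) comes from the newsletter text.
    The year is an assumption supplied by the caller.
    """
    if installed_at.tzinfo is None:
        raise ValueError("installed_at must be timezone-aware")
    start = datetime(year, 3, 31, 0, 21, tzinfo=timezone.utc)
    end = datetime(year, 3, 31, 3, 29, tzinfo=timezone.utc)
    # Comparing aware datetimes handles the conversion to UTC for us.
    return start <= installed_at <= end


# Placeholder year, for illustration only.
EXAMPLE_YEAR = 2000
# An install logged at 20:30 on March 30 in UTC-5 is 01:30 UTC on March 31.
local_install = datetime(
    EXAMPLE_YEAR, 3, 30, 20, 30, tzinfo=timezone(timedelta(hours=-5))
)
print(in_exposure_window(local_install, EXAMPLE_YEAR))
```

The check requires timezone-aware timestamps on purpose: a local-time log entry read as UTC could easily land on the wrong side of the window.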

Anthropic's response: The company confirmed the leak, calling it a "release packaging issue caused by human error, not a security breach" with no customer data exposed. The irony has not been lost on the community: a system built specifically to prevent leaks (Undercover Mode) leaked alongside everything else it was designed to protect. If you use Claude Code via npm, migrate to the native installer immediately and rotate your API keys.

ARC-AGI-3: The Video Game Test That Broke Every AI Model

The ARC Prize Foundation released ARC-AGI-3, a new interactive reasoning benchmark that dropped every frontier model below 1%. Humans solve 100% of the environments on their first try.

How it works: Instead of static puzzles, agents are dropped into video game-like environments with no instructions, no stated goals and no training data. They have to figure out the rules, discover objectives and execute a strategy entirely through interaction. Scoring uses a squared efficiency penalty against human baselines, which blocks brute-force approaches.

The scores: Gemini 3.1 Pro led at 0.37%. GPT-5.4 scored 0.26%. Opus 4.6 managed 0.25%. Grok 4.20 scored 0%.

Why it matters: The previous ARC-AGI-2 benchmark saw models climb from single digits to over 50% as labs optimised for it. Gemini 3.1 Pro hit 77% on that version. ARC-AGI-3 reset the board entirely because it tests something different: the ability to learn from scratch in an unfamiliar environment rather than recalling patterns from training data. A $2M prize pool backs the challenge, with a $700K grand prize for human-level performance.

ARC creator Francois Chollet's point is blunt: if a system needs hand-crafted prompts and custom scaffolding to handle a new task, the intelligence is in the scaffolding, not the model.
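To see why a squared efficiency penalty discourages brute force, here is a rough sketch. The exact ARC-AGI-3 formula is not given here, so the form below is an assumption: the ratio of human actions to agent actions, capped at 1 and then squared. The function name `efficiency_score` and the action counts are made up for illustration.

```python
def efficiency_score(human_actions: int, agent_actions: int, solved: bool) -> float:
    """Assumed scoring form, not the official one.

    An unsolved environment scores 0. A solved one scores
    min(1, human_actions / agent_actions) squared, so an agent that needs
    10x the human action count keeps only 1% of the credit.
    """
    if not solved:
        return 0.0
    if human_actions <= 0 or agent_actions <= 0:
        raise ValueError("action counts must be positive")
    ratio = min(1.0, human_actions / agent_actions)
    return ratio ** 2


# Hypothetical environment where the human baseline is 20 actions.
for agent_actions in (20, 40, 200):
    score = efficiency_score(20, agent_actions, solved=True)
    print(f"{agent_actions:>3} actions -> {score:.2%}")
```

Under this assumed form, doubling the action count quarters the score, so an agent that stumbles onto a solution through exhaustive trial and error earns almost nothing for it.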

Whether you agree or not, the gap between 77% on a known format and 0.25% on an unfamiliar one says something real about the distance between pattern matching and adaptive reasoning.

Quinnipiac Poll: Americans Use AI More, Trust It Less

A Quinnipiac University poll of nearly 1,400 US adults found that AI adoption is climbing while trust, optimism and job confidence are all moving in the opposite direction.

Usage up: 51% now use AI for research (up from 37% in April 2025). 28% use it for writing. 27% for work or school projects. Only 27% say they have never used an AI tool, down from 33%.

Trust flat to falling: 76% trust AI-generated information rarely or only sometimes. Just 21% trust it most or almost all of the time. These numbers are largely unchanged from last year, meaning a year of additional usage did not build trust.

Job anxiety spiking: 70% expect AI to reduce job opportunities, up from 56% a year ago. Gen Z is the most pessimistic cohort at 81%, despite being the most frequent users. Among employed Americans, 30% are concerned AI will make their specific job obsolete, up from 21%.

Harm vs good: 55% say AI will do more harm than good in daily life, up from 44%. In education, that figure hits 64%. Only 15% would be willing to have an AI as their direct supervisor.

The most striking finding: usage and trust are moving in opposite directions. More exposure is producing more scepticism, not less. That pattern, combined with 80% of respondents saying they are concerned about AI and 74% saying the government is not doing enough to regulate it, is the kind of data that eventually shapes policy.

Tool of the Day: Softr

Softr just launched its AI Co-Builder, a no-code platform that turns plain language descriptions into production-ready business software.

1. Go to softr.io and sign up for free
2. Describe the app you need in plain language (e.g. "a client portal with project tracking and invoicing")
3. The Co-Builder generates your database, UI, permissions and business logic in one shot
4. Edit visually or keep prompting the AI to iterate
5. Connect your existing data from Airtable, Google Sheets, Notion, HubSpot or 15+ other sources

Unlike vibe-coding tools that stop at the prototype, Softr builds on five years of pre-built infrastructure so authentication, roles and workflow automation work out of the box. Used by over a million builders, including teams at Netflix, Google and Stripe.

Try it yourself: softr.io

Light Bytes

Google Veo 3.1 Lite: New budget video generation model for developers at half the cost of its Fast variant, generating clips up to 8 seconds.

Mercor breach: AI recruiting startup hit by a supply chain attack through the open-source LiteLLM project. Hacking group Lapsus$ claims access to stolen data.

Salesforce Slackbot upgrade: 30 new agent capabilities added to Slack, including reusable skills, MCP connections and desktop operation.